Salesforce Certified MuleSoft Platform Architect (Mule-Arch-201) Exam Questions

- Topic 1: Explaining Application Network Basics: This topic includes subtopics related to identifying and differentiating between technologies for API-led connectivity, describing the role and characteristics of web APIs, assigning APIs to tiers, and understanding Anypoint Platform components.

- Topic 2: Establishing Organizational and Platform Foundations: Advising on a Center for Enablement (C4E) and identifying KPIs, describing MuleSoft Catalyst's structure, comparing Identity and Client Management options, and identifying data residency types are essential subtopics.

- Topic 3: Designing and Sharing APIs: Identifying dependencies between API components, creating and publishing reusable API assets, mapping API data models between Bounded Contexts, and recognizing idempotent HTTP methods.

- Topic 4: Designing APIs Using System, Process, and Experience Layers: Identifying suitable APIs for business processes, assigning them according to functional focus, and recommending data model approaches are its subtopics.

- Topic 5: Governing Web APIs on Anypoint Platform: This topic includes subtopics related to managing API instances and environments, selecting API policies, enforcing API policies, securing APIs, and understanding OAuth 2.0 relationships.

- Topic 6: Architecting and Deploying API Implementations: It covers important aspects like using auto-discovery, identifying VPC requirements, comparing hosting options, and understanding testing methods. The topic also involves automated building, testing, and deploying in a DevOps setting.

- Topic 7: Deploying API Implementations to CloudHub: Understanding Object Store usage, selecting worker sizes, predicting app reliability and performance, and comparing load balancers. Avoiding single points of failure in deployments is also its sub-topic.

- Topic 8: Meeting API Quality Goals: This topic focuses on designing resilience strategies, selecting appropriate caching and Object Store (OS) usage scenarios, and describing horizontal scaling benefits.

- Topic 9: Monitoring and Analyzing Application Networks: It discusses Anypoint Platform components for data generation, collected metrics, and key alerts. This topic also includes specifying which alerts to define for Mule applications.

Free Salesforce Certified MuleSoft Platform Architect (Mule-Arch-201) Exam Actual Questions

Note: Premium Questions for Salesforce Certified MuleSoft Platform Architect (Mule-Arch-201) were last updated on Apr. 15, 2026 (see below)

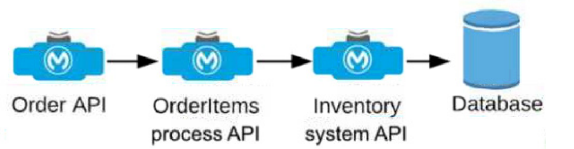

An Order API triggers a sequence of other API calls to look up details of an order's items in a back-end inventory database. The Order API calls the OrderItems process API, which calls the Inventory system API. The Inventory system API performs database operations in the back-end inventory database.

The network connection between the Inventory system API and the database is known to be unreliable and hang at unpredictable times.

Where should a two-second timeout be configured in the API processing sequence so that the Order API never waits more than two seconds for a response from the OrderItems process API?

Understanding the API Flow and Timeout Requirement:

The Order API initiates a call to the OrderItems process API, which in turn calls the Inventory system API to fetch details from the inventory database.

The requirement specifies that the Order API should not wait more than two seconds for a response from the OrderItems process API, even if there are delays further down the chain (between Inventory system API and the database).

Choosing the Appropriate Timeout Location:

Setting the timeout at the OrderItems process API level ensures that if the Inventory system API takes longer than two seconds to respond, the OrderItems process API will terminate the request and send a timeout response back to the Order API. This prevents the Order API from waiting indefinitely due to the unreliable connection to the database.

If the timeout were set in the Inventory system API or database, it would not help the Order API directly, as the OrderItems process API would still be waiting for a response.

Detailed Analysis of Each Option:

Option A (Correct Answer): Setting the timeout in the OrderItems process API allows it to control how long it waits for a response from the Inventory system API. If the Inventory system API does not respond within two seconds, the OrderItems process API can terminate the call and return a timeout response to the Order API, meeting the requirement.

Option B: Setting the timeout in the Order API would not limit the wait time at the OrderItems process API level, meaning the OrderItems process API could still wait indefinitely for the Inventory system API, leading to a longer delay.

Option C: Setting the timeout in the Inventory system API only affects the connection to the database and does not influence how long the OrderItems process API waits for the Inventory system API's response.

Option D: Setting a timeout in the database is not feasible in this context since database timeouts are typically configured for database operations and would not directly control the API response times in the overall API chain.

Conclusion:

Option A is the best choice, as it ensures that the OrderItems process API does not hold the Order API longer than the required two seconds, even if the downstream connection to the database hangs. This configuration aligns with MuleSoft best practices for setting timeouts in API orchestration to manage dependencies and prevent delays across a chain of API calls.

For additional information on timeout settings, refer to MuleSoft documentation on handling timeouts and API orchestration best practices.
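The reasoning above can be sketched in plain Python. This is a simulation only, not Mule configuration: the function names `inventory_system_api` and `order_items_process_api`, the `delay_s` parameter, and the 504 status are assumptions made for illustration. The process-level function bounds how long it waits for the downstream call and returns a timeout response to its caller instead of hanging.

```python
import concurrent.futures
import time

# Shared pool used to run the downstream call so the caller can bound its wait.
_pool = concurrent.futures.ThreadPoolExecutor(max_workers=4)


def inventory_system_api(delay_s):
    """Hypothetical stand-in for the Inventory system API.

    delay_s simulates the unreliable database connection hanging.
    """
    time.sleep(delay_s)
    return {"status": 200, "items": ["sku-1"]}


def order_items_process_api(delay_s, timeout_s=2.0):
    """Hypothetical stand-in for the OrderItems process API.

    Waits at most timeout_s for the Inventory system API, then gives up
    and returns a timeout response to its caller (the Order API).
    """
    future = _pool.submit(inventory_system_api, delay_s)
    try:
        return future.result(timeout=timeout_s)
    except concurrent.futures.TimeoutError:
        # Status 504 is an assumed choice for "downstream timed out".
        return {"status": 504, "error": "Inventory system API timed out"}


fast = order_items_process_api(delay_s=0.0, timeout_s=0.2)
slow = order_items_process_api(delay_s=0.5, timeout_s=0.1)
```

The short delays keep the example quick to run. With the scenario's values, `timeout_s` would be 2.0.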

A company deploys Mule applications with default configurations through Runtime Manager to customer-hosted Mule runtimes. Each Mule application is an API implementation that exposes RESTful interfaces to API clients. The Mule runtimes are managed by the MuleSoft-hosted control plane. The payload is never used by any Logger components.

When an API client sends an HTTP request to a customer-hosted Mule application, which metadata or data (payload) is pushed to the MuleSoft-hosted control plane?

Understanding the Data Flow Between Mule Runtimes and Control Plane:

When Mule applications are deployed on customer-hosted Mule runtimes, the MuleSoft-hosted control plane (Anypoint Platform) can monitor and manage these applications. However, due to data privacy and security, the control plane only collects specific types of information.

Typically, only metadata about the request and response (such as headers, status codes, and timestamps) is sent to the MuleSoft-hosted control plane. The actual payload data is not transmitted unless explicitly configured, ensuring that sensitive data remains within the customer's network.

Evaluating the Options:

Option A (Only the data): This is incorrect because the payload data itself is not automatically sent to the control plane in default configurations.

Option B (No data): This is incorrect as well; while the payload is not sent, metadata is still collected and sent to the control plane.

Option C (The data and metadata): This option is incorrect because data (payload) is not transmitted to the control plane by default.

Option D (Correct Answer): Only the metadata is sent to the MuleSoft-hosted control plane by default, aligning with MuleSoft's design to prioritize security and data privacy for customer-hosted runtimes.

Conclusion:

Option D is the correct answer, as by default, only metadata is sent to the MuleSoft-hosted control plane, and not the payload. This configuration is designed to protect sensitive data from being exposed outside the customer's hosted environment.

For more details, refer to MuleSoft's documentation on telemetry data collected in customer-hosted Mule runtimes and the MuleSoft control plane.
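As a rough illustration of the metadata versus payload distinction, the sketch below builds a metadata-only record from a request and response. The function name `control_plane_event` and every field name are hypothetical. This does not reproduce the actual format Anypoint Platform uses.

```python
def control_plane_event(request, response):
    """Hypothetical helper: keep only metadata, never the payload."""
    return {
        "method": request["method"],
        "path": request["path"],
        "status": response["status"],
        "timestamp": response["timestamp"],
    }


request = {"method": "GET", "path": "/orders/42", "payload": "sensitive request body"}
response = {"status": 200, "timestamp": "2026-04-15T10:00:00Z", "payload": "sensitive response body"}

event = control_plane_event(request, response)
```

The resulting `event` carries the method, path, status, and timestamp, while both payloads stay behind in the local `request` and `response` objects.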

An organization is implementing a Quote of the Day API that caches today's quote.

What scenario can use the CloudHub Object Store via the Object Store connector to persist the cache's state?

Correct Answer: When there is one CloudHub deployment of the API implementation to three CloudHub workers that must share the cache state.

*****************************************

Key details in the scenario:

>> Use the CloudHub Object Store via the Object Store connector

Considering above details:

>> CloudHub Object Stores have one-to-one relationship with CloudHub Mule Applications.

>> We CANNOT use an application's CloudHub Object Store to be shared among multiple Mule applications running in different Regions or Business Groups or Customer-hosted Mule Runtimes by using Object Store connector.

>> If sharing across applications is truly required, Anypoint Platform allows access to another application's CloudHub Object Store through the Object Store REST API, but NOT through the Object Store connector.

So, the only scenario where we can use the CloudHub Object Store via the Object Store connector to persist the cache's state is when there is one CloudHub deployment of the API implementation to multiple CloudHub workers that must share the cache state.
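The shared-cache scenario can be sketched in plain Python, under the assumption that one store object stands in for the single Object Store attached to one application. `SharedObjectStore`, `QuoteWorker`, and `fetch_quote` are hypothetical names, not the Object Store connector's API.

```python
import datetime


class SharedObjectStore:
    """Hypothetical stand-in for the one Object Store of a single application."""

    def __init__(self):
        self._data = {}

    def store(self, key, value):
        self._data[key] = value

    def retrieve(self, key):
        return self._data.get(key)


class QuoteWorker:
    """One worker of the deployment; every worker gets the same store."""

    def __init__(self, store, fetch_quote):
        self._store = store
        self._fetch_quote = fetch_quote

    def quote_of_the_day(self, today):
        key = f"quote-{today.isoformat()}"
        cached = self._store.retrieve(key)
        if cached is None:
            # Cache miss: compute once and persist for the other workers.
            cached = self._fetch_quote()
            self._store.store(key, cached)
        return cached


calls = []


def fetch_quote():
    """Hypothetical quote source; records each call so misses can be counted."""
    calls.append(1)
    return "Stay curious."


store = SharedObjectStore()
workers = [QuoteWorker(store, fetch_quote) for _ in range(3)]
today = datetime.date(2026, 4, 15)
quotes = [w.quote_of_the_day(today) for w in workers]
```

Because all three workers share one store, only the first request misses the cache. The other two read the persisted value.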

An organization wants to make sure only known partners can invoke the organization's APIs. To achieve this security goal, the organization wants to enforce a Client ID Enforcement policy in API Manager so that only registered partner applications can invoke the organization's APIs. In what type of API implementation does MuleSoft recommend adding an API proxy to enforce the Client ID Enforcement policy, rather than embedding the policy directly in the application's JVM?

Correct Answer: A Non-Mule application

*****************************************

>> All types of Mule applications (Mule 3, Mule 4, with APIkit, with custom Java code, etc.) running on Mule runtimes support embedded policy enforcement.

>> The only option that does not support embedded policy enforcement, and therefore must have an API proxy, is a Non-Mule application.

So, Non-Mule application is the right answer.
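The proxy pattern described above can be illustrated with a minimal sketch. The names `api_proxy`, `non_mule_backend`, and `REGISTERED_CLIENT_IDS`, the `client_id` header, and the 401 status are all assumptions for illustration. The actual policy is configured in API Manager, not hand-written.

```python
# Hypothetical set of registered partner applications.
REGISTERED_CLIENT_IDS = {"partner-a", "partner-b"}


def non_mule_backend(request):
    """Stand-in for an API implementation that does not run on a Mule runtime."""
    return {"status": 200, "body": "order accepted"}


def api_proxy(request, backend=non_mule_backend, registered=REGISTERED_CLIENT_IDS):
    """Reject unknown clients before the request reaches the backend."""
    client_id = request.get("headers", {}).get("client_id")
    if client_id not in registered:
        # Assumed rejection status; the backend is never invoked.
        return {"status": 401, "body": "invalid client"}
    return backend(request)


known = api_proxy({"headers": {"client_id": "partner-a"}})
unknown = api_proxy({"headers": {"client_id": "stranger"}})
missing = api_proxy({"headers": {}})
```

The backend stays unaware of client registration. The check lives entirely in the layer in front of it, which is the role an API proxy plays for an implementation that cannot embed the policy.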

The implementation of a Process API must change.

What is a valid approach that minimizes the impact of this change on API clients?

Correct Answer: Implement required changes to the Process API implementation so that, whenever possible, the Process API's RAML definition remains unchanged.

*****************************************

Key requirement in the question is:

>> Approach that minimizes the impact of this change on API clients

Based on above:

>> Updating the RAML definition could impact API clients if the changes require anything mandatory from the client side, so it should be avoided unless strictly necessary.

>> Implementing the changes as a completely different API and then redirecting the clients with a 3xx status code is a disruptive design that heavily impacts the API clients.

>> Organizations and IT cannot simply postpone the required changes until all API consumers acknowledge they are ready to migrate to a new Process API or API version. This is unrealistic.

The best way to handle such changes is to implement them in the API implementation so that, whenever possible, the API's RAML definition remains unchanged.
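The idea of changing an implementation behind a stable interface can be shown with a small sketch. Both functions and the response shape (`orderId`, `status`) are hypothetical. They stand in for two versions of a Process API implementation that honor the same contract.

```python
def get_order_status_v1(order_id):
    """Original implementation: looks the status up in a table."""
    table = {"42": "SHIPPED"}
    return {"orderId": order_id, "status": table.get(order_id, "UNKNOWN")}


def get_order_status_v2(order_id):
    """Changed implementation: derives the status from an event history."""
    events = {"42": ["CREATED", "PAID", "SHIPPED"]}
    history = events.get(order_id, [])
    return {"orderId": order_id, "status": history[-1] if history else "UNKNOWN"}


def client(get_status):
    """An API client that depends only on the response contract."""
    response = get_status("42")
    return f"Order {response['orderId']} is {response['status']}"


before = client(get_order_status_v1)
after = client(get_order_status_v2)
```

The client code is identical in both calls. Only the implementation behind the unchanged contract differs, so the client needs no migration.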
