Microsoft DP-700 Exam Questions

Free Microsoft DP-700 Exam Actual Questions

Note: Premium Questions for DP-700 were last updated on Jun. 04, 2026 (see below)

You have a Fabric warehouse named DW1 that contains a Type 2 slowly changing dimension (SCD) dimension table named DimCustomer. DimCustomer contains 100 columns and 20 million rows. The columns are of various data types, including int, varchar, date, and varbinary.

You need to identify incoming changes to the table and update the records when there is a change. The solution must minimize resource consumption.

What should you use to identify changes to attributes?

You have a Fabric workspace that contains a write-intensive warehouse named DW1. DW1 stores staging tables that are used to load a dimensional model. The tables are often read once, dropped, and then recreated to process new data.

You need to minimize the load time of DW1.

What should you do?

You have a Fabric workspace that contains an eventstream named EventStream1. EventStream1 outputs events to a table in a lakehouse.

You need to remove files that are older than seven days and are no longer in use.

Which command should you run?

VACUUM cleans up storage by removing files that a Delta table no longer references and that are older than the retention threshold. For example, to remove files older than seven days:

VACUUM delta.`/path_to_table` RETAIN 168 HOURS;
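The RETAIN clause is expressed in hours, so a seven-day window corresponds to 7 × 24 = 168 hours. As a minimal sketch of that conversion, the hypothetical helper `vacuum_statement` below (not part of any library) builds the statement from a retention period given in days. The path `/path_to_table` is the placeholder from the example above, and running the result with `spark.sql` assumes a notebook session where `spark` is already defined.

```python
def vacuum_statement(table_path: str, retain_days: int) -> str:
    """Build a Delta Lake VACUUM statement for a retention period in days.

    Hypothetical helper for illustration; RETAIN takes a value in hours.
    """
    if retain_days < 0:
        raise ValueError("retain_days must be non-negative")
    retain_hours = retain_days * 24  # convert days to hours for RETAIN
    return f"VACUUM delta.`{table_path}` RETAIN {retain_hours} HOURS"


stmt = vacuum_statement("/path_to_table", 7)
print(stmt)  # VACUUM delta.`/path_to_table` RETAIN 168 HOURS

# Assumption: in a Spark notebook with an active session, the statement
# could then be executed with: spark.sql(stmt)
```

Keeping the conversion in one place avoids the easy mistake of passing a number of days where the clause expects hours.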

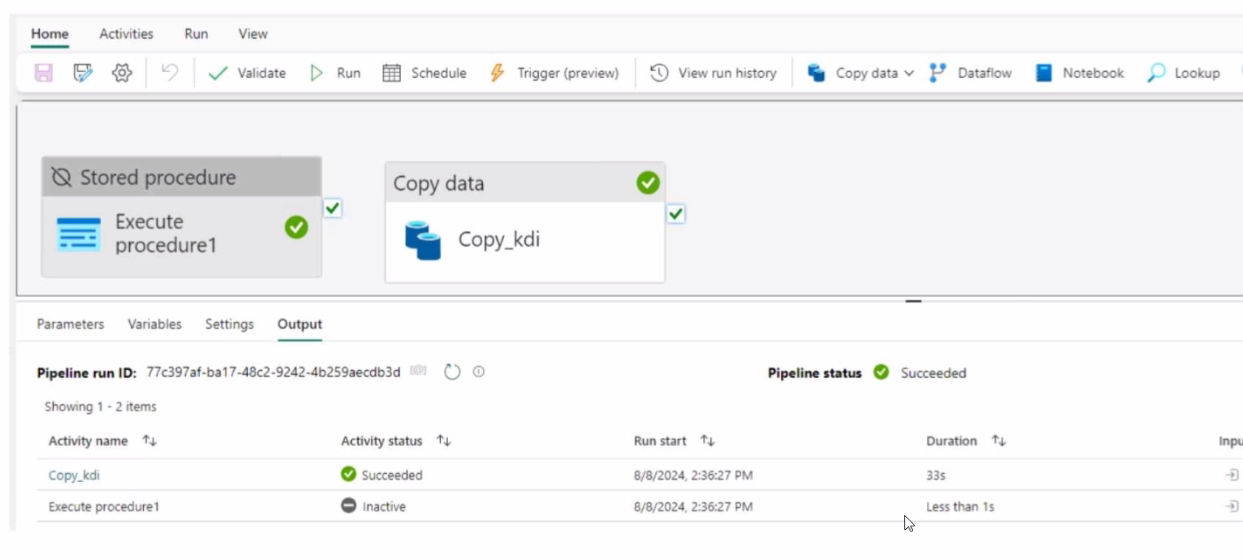

You have a Fabric workspace that contains a data pipeline named Pipeline1 as shown in the exhibit.

