Microsoft DP-600 Exam Questions

Free Microsoft DP-600 Exam Actual Questions

Note: Premium questions for DP-600 were last updated on May 17, 2026.

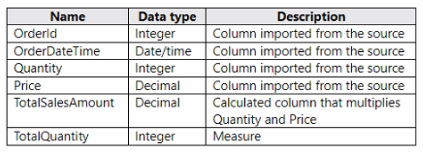

You have a Fabric tenant that contains a semantic model named Model1. Model1 uses Import mode. Model1 contains a table named Orders. Orders has 100 million rows and the following fields.

You need to reduce the memory used by Model1 and the time it takes to refresh the model. Which two actions should you perform? Each correct answer presents part of the solution. NOTE: Each correct answer is worth one point.

To reduce memory usage and refresh time, split OrderDateTime into separate date and time columns (A). Import-mode models compress each column by dictionary encoding, so a column's cost grows with its number of distinct values. A combined date/time column can approach one distinct value per row, whereas a date column holds at most one value per day and a time column at most one value per time of day (86,400 at one-second precision). Also replace TotalSalesAmount, currently a calculated column, with a measure (D). A calculated column is computed during every refresh and stored for all 100 million rows, while a measure is evaluated only at query time, so the model becomes smaller and refreshes faster. Reference: The Power BI documentation on model optimization recommends using measures for calculations that don't need to be stored and choosing column data types that improve performance.
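A rough way to see why splitting helps: because dictionary size tracks the number of distinct values in a column, counting distinct values is a reasonable proxy for memory cost. The minimal Python sketch below uses hypothetical minute-level timestamps (not data from the question) and compares one combined date/time column with separate date and time columns.

```python
from datetime import datetime, timedelta


def distinct_counts(timestamps):
    """Return (distinct datetimes, distinct dates, distinct times)."""
    combined = len(set(timestamps))
    dates = len({ts.date() for ts in timestamps})
    times = len({ts.time() for ts in timestamps})
    return combined, dates, times


# Hypothetical data: one order timestamp per minute for 30 days.
start = datetime(2024, 1, 1)
stamps = [start + timedelta(minutes=m) for m in range(30 * 24 * 60)]

combined, dates, times = distinct_counts(stamps)
print("single OrderDateTime column:", combined)    # 43200 distinct values
print("split Date + Time columns:", dates + times)  # 30 + 1440 = 1470
```

With 100 million real rows and finer time precision the gap can be much larger, but the actual savings depend on the data in Orders.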

You have a Fabric tenant that contains a warehouse named DW1 and a lakehouse named LH1. DW1 contains a table named Sales.Product. LH1 contains a table named Sales.Orders.

You plan to schedule an automated process that will create a new point-in-time (PIT) table named Sales.ProductOrder in DW1. Sales.ProductOrder will be built by using the results of a query that will join Sales.Product and Sales.Orders.

You need to ensure that the types of columns in Sales.ProductOrder match the column types in the source tables. The solution must minimize the number of operations required to create the new table.

Which operation should you use?

You have a Fabric workspace named Workspace1.

Workspace1 contains multiple semantic models, including a model named Model1. Model1 is updated by using an XMLA endpoint.

You need to increase the speed of the write operations of the XMLA endpoint.

What should you do?

When using XMLA endpoints to manage and update semantic models in Microsoft Fabric, the performance of write operations (such as processing, structural changes, or metadata deployments from Tabular Editor) is directly influenced by the storage format and how the model is persisted.

Why Option A is Correct

By default, Fabric semantic models use the Small semantic model storage format.

To improve the performance of XMLA write operations, change the workspace setting to use the Large semantic model storage format.

The large format lets a model grow beyond the small format's size limit (up to the capacity's maximum memory), and Microsoft documents improved XMLA write performance as one of its benefits.

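This is a workspace-level setting (the default storage format), so it applies to semantic models created in Workspace1 after the change; an existing model such as Model1 can also be switched to the large format in its own settings.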

Microsoft's documentation lists improved performance of XMLA write operations among the benefits of the Large semantic model storage format. The large format is not required for XMLA writes, but it makes them faster, which is what this question asks for.
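The setting is normally changed in the workspace settings in the Fabric portal. As a scriptable alternative, the sketch below assumes two Power BI REST API operations, Groups - Update Group (body field `defaultDatasetStorageFormat`) and Datasets - Update Dataset In Group (body field `targetStorageMode` set to `PremiumFiles`); confirm the field names against the current API reference before relying on them. It only builds the requests. The workspace ID, model ID, and access token are hypothetical placeholders, and acquiring a Microsoft Entra token is out of scope.

```python
import json
import urllib.request

# Assumed base URL of the Power BI REST API.
API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def _patch(url, body, token):
    """Build (but do not send) an authenticated JSON PATCH request."""
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        method="PATCH",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


def workspace_default_large(workspace_id, token):
    """Groups - Update Group (assumed): make Large the workspace's
    default storage format for semantic models."""
    return _patch(
        f"{API_ROOT}/groups/{workspace_id}",
        {"defaultDatasetStorageFormat": "Large"},
        token,
    )


def convert_model_to_large(workspace_id, model_id, token):
    """Datasets - Update Dataset In Group (assumed): switch one existing
    semantic model to the large format (PremiumFiles)."""
    return _patch(
        f"{API_ROOT}/groups/{workspace_id}/datasets/{model_id}",
        {"targetStorageMode": "PremiumFiles"},
        token,
    )


# Hypothetical placeholder values; nothing is sent here.
req = workspace_default_large("WORKSPACE1_ID", "ACCESS_TOKEN")
print(req.get_method(), req.full_url)
# Sending would be: urllib.request.urlopen(req)
```

Keeping request construction separate from sending makes the payloads easy to inspect before anything touches a real workspace.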

Why the Other Options Are Incorrect

B. Configure Model1 to use the Direct Lake storage format.

Direct Lake mode is designed for query performance: it reads Delta tables directly from OneLake without importing a copy of the data.

It improves query latency and freshness but does not improve XMLA write operations, which deal with model metadata and structural updates.

C. Delete any unused semantic models from Workspace1.

Deleting unused semantic models helps manage capacity and storage but does not increase the speed of XMLA endpoint write operations.

Workspace storage overhead does not directly impact the write throughput of XMLA operations.

D. Delete any unused columns from Model1.

Removing unused columns reduces the memory footprint and can improve query performance.

However, it does not directly improve the speed of XMLA write operations. The bottleneck in XMLA writes is tied to the storage format, not the model size alone.

Summary

To increase the speed of XMLA write operations on semantic models, you must enable the Large semantic model storage format at the workspace level. This setting ensures better handling of writes and metadata operations via the XMLA endpoint.

Reference

Large models in Power BI and Microsoft Fabric

Use the XMLA endpoint in Microsoft Fabric

You are creating a semantic model in Microsoft Power BI Desktop.

You plan to make bulk changes to the model by using the Tabular Model Definition Language (TMDL) extension for Microsoft Visual Studio Code.

You need to save the semantic model to a file.

Which file format should you use?

To edit the semantic model with the Tabular Model Definition Language (TMDL) extension for Visual Studio Code, save it as a Power BI Project (PBIP) file. Saving as PBIP writes the report and the semantic model to folders of plain-text files, and when the TMDL format is used, the model definition is stored as .tmdl files that the extension can open and edit in bulk. A PBIX file packages the report, data model, and queries into a single file for Power BI Desktop, so its model cannot be edited as TMDL text. Reference: Microsoft's documentation on Power BI Desktop projects (PBIP) and the TMDL format.
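Assuming the project was saved as PBIP with the model in TMDL format, the files the extension works on can be located with a short script. This Python sketch assumes the layout `<name>.SemanticModel/definition/`, with table files in a `tables` subfolder, which matches recent Power BI Desktop versions but should be checked against your own project; `find_tmdl_files` is a hypothetical helper, not part of any Power BI tooling.

```python
from pathlib import Path


def find_tmdl_files(project_dir):
    """Return every .tmdl file under the <name>.SemanticModel/definition
    folder(s) of a Power BI Project folder, sorted by path."""
    files = []
    for model_dir in Path(project_dir).glob("*.SemanticModel"):
        definition = model_dir / "definition"
        if definition.is_dir():
            # rglob also picks up files in subfolders such as tables/
            files.extend(definition.rglob("*.tmdl"))
    return sorted(files)


# Usage: list the model files of a PBIP project in the current folder.
for tmdl in find_tmdl_files("."):
    print(tmdl)
```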

You have a Microsoft Power BI project that contains a semantic model. You plan to use Azure DevOps for version control.

You need to modify the .gitignore file to prevent the data values from the data sources from being pushed to the repository. Which file should you reference?
