Microsoft DP-200 Exam Questions

- Topic 1: Responsibility for Data-Related Tasks That Include Ingesting, Egressing, and Transforming Data

- Topic 2: Multiple Sources Using Various Services and Tools

- Topic 3: Azure Data Engineer Collaborates With Business Stakeholders

- Topic 4: Identify and Meet Data Requirements While Designing and Implementing the Management

- Topic 5: Monitoring Security and Privacy of Data Using the Full Stack of Azure Services

Free Microsoft DP-200 Exam Actual Questions

Note: Premium questions for DP-200 were last updated on 31-08-2021 (see below)

Use the following login credentials as needed:

Azure Username: xxxxx

Azure Password: xxxxx

The following information is for technical support purposes only:

Lab Instance: 10543936

You plan to enable Azure Multi-Factor Authentication (MFA).

You need to ensure that User1-10543936@ExamUsers.com can manage any databases hosted on an Azure SQL server named SQL10543936 by signing in using his Azure Active Directory (Azure AD) user account.

To complete this task, sign in to the Azure portal.

You need to ensure that only the resources on a virtual network named VNET1 can access an Azure Storage account named storage10543936.

To complete this task, sign in to the Azure portal.

You need to replicate db1 to a new Azure SQL server named db1-copy10543936 in the US West region.

To complete this task, sign in to the Azure portal.

You need to ensure that you can recover any blob data from an Azure Storage account named storage10543936 up to 10 days after the data is deleted.

To complete this task, sign in to the Azure portal.
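The requirement above maps to blob soft delete with a retention period of 10 days. As a rough illustration of what that retention window means, the following sketch checks whether a deleted blob would still be recoverable at a given time. The function name `is_recoverable` and the inclusive handling of the final instant are assumptions made for illustration; this is not an Azure SDK call.

```python
from datetime import datetime, timedelta, timezone


def is_recoverable(deleted_at: datetime, now: datetime, retention_days: int = 10) -> bool:
    """Return True if `now` falls inside the soft-delete retention window.

    Hypothetical helper: models the retention window only, and assumes the
    window is inclusive of its last instant.
    """
    return deleted_at <= now <= deleted_at + timedelta(days=retention_days)


deleted = datetime(2021, 8, 1, tzinfo=timezone.utc)
within_window = is_recoverable(deleted, deleted + timedelta(days=9))
past_window = is_recoverable(deleted, deleted + timedelta(days=11))
```

With a 10-day retention period, a blob deleted on 1 August is still inside the window nine days later and outside it eleven days later.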

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

After you answer a question in this scenario, you will NOT be able to return to it. As a result, these questions will not appear in the review screen.

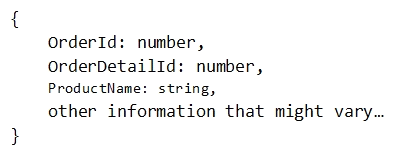

You have a container named Sales in an Azure Cosmos DB database. Sales has 120 GB of data. Each entry in Sales has the following structure.

The partition key is set to the OrderId attribute.

Users report that when they perform queries that retrieve data by ProductName, the queries take longer than expected to complete.

You need to reduce the amount of time it takes to execute the problematic queries.

Solution: You increase the Request Units (RUs) for the database.

Does this meet the goal?

To scale the provisioned throughput for your application, you can increase or decrease the number of RUs at any time.

Note: The cost of all database operations is normalized by Azure Cosmos DB and is expressed by Request Units (or RUs, for short). You can think of RUs per second as the currency for throughput. RUs per second is a rate-based currency. It abstracts the system resources such as CPU, IOPS, and memory that are required to perform the database operations supported by Azure Cosmos DB.
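To make the "rate-based currency" idea concrete, here is a minimal sketch of the arithmetic. The per-query RU charge of 50 and the throughput values are made-up numbers for illustration, and `max_queries_per_second` is a hypothetical helper, not part of any Azure library.

```python
def max_queries_per_second(provisioned_ru_per_s: float, ru_per_query: float) -> float:
    """Sustained query rate that fits inside a provisioned RU/s budget.

    Assumes every query has the same RU charge, which is a simplification.
    """
    if ru_per_query <= 0:
        raise ValueError("ru_per_query must be positive")
    return provisioned_ru_per_s / ru_per_query


# Hypothetical numbers: a query that costs 50 RUs.
rate_at_400 = max_queries_per_second(400, 50)
rate_at_1000 = max_queries_per_second(1000, 50)
```

Raising the provisioned RU/s raises the number of such queries that can run each second before requests are rate limited; it does not change the RU charge of an individual query.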

https://docs.microsoft.com/en-us/azure/cosmos-db/request-units
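The scenario turns on the partition key being OrderId while the slow queries filter on ProductName. The toy model below sketches why that matters: a filter on the partition key can be routed to one partition, while a filter on any other attribute has to visit every partition. The class `PartitionedContainer`, the CRC32 hashing, the partition count of 4, and the sample items are all assumptions for illustration and do not reflect how Azure Cosmos DB is implemented internally.

```python
import zlib
from collections import defaultdict


class PartitionedContainer:
    """Toy container that spreads items across partitions by a partition key."""

    def __init__(self, partition_key: str, partition_count: int):
        self.partition_key = partition_key
        self.partition_count = partition_count
        self.partitions = defaultdict(list)

    def _partition_for(self, value) -> int:
        # Stable hash so the routing is the same on every run.
        return zlib.crc32(str(value).encode("utf-8")) % self.partition_count

    def insert(self, item: dict) -> None:
        self.partitions[self._partition_for(item[self.partition_key])].append(item)

    def query(self, attribute: str, value):
        """Return (matching items, number of partitions visited)."""
        if attribute == self.partition_key:
            targets = [self._partition_for(value)]  # routed to one partition
        else:
            targets = list(range(self.partition_count))  # fan out to all
        matches = [
            item
            for p in targets
            for item in self.partitions[p]
            if item[attribute] == value
        ]
        return matches, len(targets)


sales = PartitionedContainer(partition_key="OrderId", partition_count=4)
for i in range(100):
    sales.insert({"OrderId": i, "ProductName": f"product-{i % 5}"})

by_order, visited_for_order = sales.query("OrderId", 42)
by_product, visited_for_product = sales.query("ProductName", "product-3")
```

In this model the OrderId lookup visits one partition, while the ProductName lookup visits all four to collect its matches.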
