Google Professional Machine Learning Engineer Exam Questions

- Topic 1: Architecting low-code AI solutions: This section of the exam measures the skills of Google Machine Learning Engineers and covers developing machine learning models using BigQuery ML. It includes selecting appropriate models for business problems, such as linear and binary classification, regression, time series, matrix factorization, boosted trees, and autoencoders. Additionally, it involves feature engineering or selection and generating predictions using BigQuery ML.

- Topic 2: Collaborating within and across teams to manage data and models: This topic covers exploring and processing organization-wide data from sources including Apache Spark, Cloud Storage, Apache Hadoop, Cloud SQL, and Cloud Spanner. It also covers prototyping models in Jupyter notebooks, and tracking and running ML experiments.

- Topic 3: Scaling prototypes into ML models: This topic covers building and training models, and choosing suitable hardware for training.

- Topic 4: Serving and scaling models: This topic covers batch and online inference, using frameworks such as XGBoost, and managing features with Vertex AI.

- Topic 5: Automating and orchestrating ML pipelines: This topic covers developing end-to-end ML pipelines, automating model retraining, and tracking and auditing metadata.

- Topic 6: Monitoring ML solutions: This topic covers identifying risks to ML solutions, as well as monitoring, testing, and troubleshooting them.

Free Google Professional Machine Learning Engineer Exam Actual Questions

Note: Premium questions for Professional Machine Learning Engineer were last updated on May 1, 2026.

Your team has been tasked with creating an ML solution in Google Cloud to classify support requests for one of your platforms. You analyzed the requirements and decided to use TensorFlow to build the classifier so that you have full control of the model's code, serving, and deployment. You will use Kubeflow pipelines for the ML platform. To save time, you want to build on existing resources and use managed services instead of building a completely new model. How should you build the classifier?

Transfer learning takes the knowledge and weights of a pre-trained model and adapts them to a new task or domain [1]. It saves time and resources by avoiding training a model from scratch, and it can improve performance and generalization because the pre-trained model has already learned from a larger, more diverse dataset [2]. AI Platform provides several established text classification models that can be used for transfer learning, such as BERT, ALBERT, or XLNet [3]. These models are based on state-of-the-art natural language processing techniques and can handle a range of text classification tasks, such as sentiment analysis, topic classification, or spam detection [4]. By using one of these models on AI Platform, you keep control of the model's code, serving, and deployment, and you can still use Kubeflow Pipelines for the ML platform. Therefore, using an established text classification model on AI Platform to perform transfer learning is the best option for this use case.

Reference:

[1] Transfer Learning - Machine Learning's Next Frontier

[2] A Comprehensive Hands-on Guide to Transfer Learning with Real-World Applications in Deep Learning

[3] Text classification models

[4] Text Classification with Pre-trained Models in TensorFlow

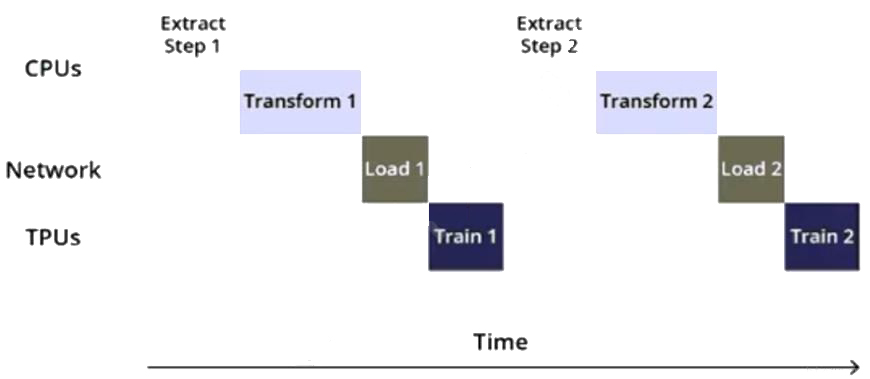

You are training an object detection model using a Cloud TPU v2. Training time is taking longer than expected. Based on this simplified trace obtained with a Cloud TPU profile, what action should you take to decrease training time in a cost-efficient way?

Training is slower than expected, and the trace points to the likely cause: an input function that is not optimized, so the TPU waits for data instead of computing. The most cost-efficient fix is to rewrite the input function to use parallel reads, parallel processing, and prefetch. The input pipeline can then prepare the next batches while the TPU is still working on the current one, which reduces training time without adding hardware.

Reference:

Cloud TPU Performance Guide

Data input pipeline performance guide
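To make the prefetch idea concrete, here is a minimal, framework-free sketch. It is not the exam's reference solution: in TensorFlow the same effect is normally obtained with `tf.data` (`interleave` and `map` with `num_parallel_calls`, followed by `prefetch`). The function names, delays, and buffer size below are assumptions chosen only for illustration.

```python
import queue
import threading
import time


def read_records(n, read_delay=0.01):
    """Simulate a slow input source: each record takes `read_delay` seconds."""
    for i in range(n):
        time.sleep(read_delay)
        yield i


def prefetch(iterable, buffer_size=4):
    """Yield items from `iterable` while a background thread reads ahead.

    Up to `buffer_size` items are prepared in advance, so the consumer
    rarely has to wait for the producer.
    """
    q = queue.Queue(maxsize=buffer_size)
    sentinel = object()  # marks the end of the stream

    def producer():
        for item in iterable:
            q.put(item)
        q.put(sentinel)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = q.get()
        if item is sentinel:
            return
        yield item


def train(records, step_delay=0.01):
    """Simulate training steps; return the items seen and the elapsed seconds."""
    start = time.perf_counter()
    seen = []
    for record in records:
        time.sleep(step_delay)  # stand-in for one accelerator step
        seen.append(record)
    return seen, time.perf_counter() - start


# Without prefetch, reading and training alternate; with it, they overlap.
serial_items, serial_time = train(read_records(20))
overlap_items, overlap_time = train(prefetch(read_records(20)))
```

Both runs consume the same records in the same order. The prefetched run should finish sooner because reading the next record overlaps with the current training step, which is the behavior the rewritten input function is meant to achieve.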

You work for a retail company. You have been asked to develop a model to predict whether a customer will purchase a product on a given day. Your team has processed the company's sales data, and created a table with the following columns:

* Customer_id

* Product_id

* Date

* Days_since_last_purchase (measured in days)

* Average_purchase_frequency (measured in 1/days)

* Purchase (binary class, if customer purchased product on the Date)

You need to interpret your model's results for each individual prediction. What should you do?

According to the official exam guide [1], one of the skills assessed in the exam is to "explain the predictions of a trained model". Vertex AI provides feature attributions using Shapley values, a cooperative game theory algorithm that assigns credit to each feature in a model for a particular outcome [2]. Feature attributions help you understand how the model arrives at its predictions, and debug or optimize the model accordingly. You can use AutoML for Tabular Data to generate and query local feature attributions [3]. The other options are not relevant or optimal for this scenario.

Reference:

[1] Professional ML Engineer Exam Guide

[2] Feature attributions for classification and regression

[3] AutoML for Tabular Data

[4] Google Professional Machine Learning Certification Exam 2023
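As a rough illustration of what a local (per-prediction) Shapley attribution is, the sketch below computes exact Shapley values for a tiny hand-written scoring function. It does not call Vertex AI or reproduce its implementation. The toy model, its coefficients, the baseline, and the example instance are all assumptions made up for this example; only the two feature names come from the table above.

```python
from itertools import permutations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley attributions for one prediction.

    For every ordering of the features, switch each feature from its
    baseline value to the instance value and record how much the
    prediction changes. The average change per feature is its attribution.
    """
    n = len(instance)
    totals = [0.0] * n
    for order in permutations(range(n)):
        current = list(baseline)
        previous = predict(current)
        for i in order:
            current[i] = instance[i]
            updated = predict(current)
            totals[i] += updated - previous
            previous = updated
    return [t / factorial(n) for t in totals]


def toy_model(x):
    """Hypothetical purchase score (not a trained model)."""
    days_since_last_purchase, average_purchase_frequency = x
    return (
        0.5
        - 0.02 * days_since_last_purchase
        + 2.0 * average_purchase_frequency
        + 0.1 * days_since_last_purchase * average_purchase_frequency
    )


# Assumed example: one customer versus an "average customer" baseline.
instance = [5.0, 0.2]
baseline = [30.0, 0.05]
attributions = shapley_values(toy_model, instance, baseline)
```

The attributions sum to the difference between the score for this customer and the score for the baseline, so each number reads as that feature's share of the change. Exact enumeration grows factorially with the number of features, which is why production systems rely on sampled approximations.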

You have a large corpus of written support cases that can be classified into 3 separate categories: Technical Support, Billing Support, or Other Issues. You need to quickly build, test, and deploy a service that will automatically classify future written requests into one of the categories. How should you configure the pipeline?

AutoML Natural Language is a service that allows you to quickly build, test and deploy natural language processing (NLP) models without needing to have expertise in NLP or machine learning. You can use it to train a classifier on your corpus of written support cases, and then use the AutoML API to perform classification on new requests. Once the model is trained, it can be deployed as a REST API. This allows the classifier to be integrated into your pipeline and be easily consumed by other systems.

You are training and deploying updated versions of a regression model with tabular data by using Vertex AI Pipelines, Vertex AI Training, Vertex AI Experiments, and Vertex AI Endpoints. The model is deployed in a Vertex AI endpoint, and your users call the model by using the Vertex AI endpoint. You want to receive an email when the feature data distribution changes significantly, so you can retrigger the training pipeline and deploy an updated version of your model. What should you do?

Prediction drift is the change in the distribution of feature values or labels over time. It can degrade the model's accuracy, and may require retraining or redeploying the model. Vertex AI Model Monitoring lets you monitor prediction drift on your deployed models and endpoints, and set up alerts and notifications when the drift exceeds a threshold. You can specify an email address to receive the notifications, and use them as the signal to retrigger the training pipeline and deploy an updated version of your model. This is the most direct and convenient way to achieve your goal.

Reference:

Vertex AI Model Monitoring

Monitoring prediction drift

Setting up alerts and notifications
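The underlying drift check can be sketched in a few lines of plain Python. This is only an illustration of comparing a baseline feature distribution with recent serving data, not the Vertex AI Model Monitoring API. Jensen-Shannon divergence is used here as one common distance for numerical features; the bin edges, sample values, and the 0.3 threshold are assumptions chosen for the example.

```python
import math


def histogram(values, edges):
    """Return normalized bin frequencies for `values`.

    Values beyond the last edge are counted in the last bin, and values
    below the first edge in the first bin.
    """
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    total = sum(counts)
    return [c / total for c in counts]


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical, 1 for disjoint."""

    def kl(a, b):
        return sum(x * math.log2(x / y) for x, y in zip(a, b) if x > 0)

    m = [(x + y) / 2 for x, y in zip(p, q)]
    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


# Hypothetical Days_since_last_purchase samples.
training_values = [3, 8, 12, 15, 18, 22, 25, 9, 14, 27]
serving_values = [28, 33, 35, 31, 38, 36, 29, 34, 39, 32]

edges = [0, 10, 20, 30, 40]      # assumed bin edges
drift_threshold = 0.3            # assumed alerting threshold

baseline_dist = histogram(training_values, edges)
serving_dist = histogram(serving_values, edges)
drift_score = js_divergence(baseline_dist, serving_dist)
should_alert = drift_score > drift_threshold
```

When `should_alert` is true, a monitoring service would send the notification, and that notification is the cue to retrigger the training pipeline.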
