Databricks Machine Learning Associate Exam Questions

- Topic 1: Databricks Machine Learning: It covers sub-topics of AutoML, Databricks Runtime, Feature Store, and MLflow.

- Topic 2: ML Workflows: The topic focuses on Exploratory Data Analysis, Feature Engineering, Training, Evaluation and Selection.

- Topic 3: Spark ML: This topic covers distributed ML concepts, Spark ML modeling APIs, Hyperopt, the pandas API on Spark, pandas UDFs, and pandas Function APIs.

- Topic 4: Scaling ML Models: This topic covers Model Distribution and Ensembling Distribution.

Free Databricks Machine Learning Associate Exam Actual Questions

Note: Premium questions for Databricks Machine Learning Associate were last updated on May 27, 2026.

Which of the following tools can be used to distribute large-scale feature engineering without the use of a UDF or pandas Function API for machine learning pipelines?

Spark ML (the DataFrame-based API of MLlib, Spark's machine learning library) is designed for large-scale data processing and machine learning directly within Apache Spark. Its built-in transformers and estimators, such as StringIndexer, OneHotEncoder, VectorAssembler, and StandardScaler, run feature engineering as distributed operations on Spark DataFrames, so there is no need to write user-defined functions (UDFs) or use the pandas Function API. Unlike Keras, pandas, PyTorch, and scikit-learn, Spark ML operates natively on distributed Spark DataFrames across a cluster, which suits big-data feature engineering. Reference:

Spark MLlib documentation (Feature Engineering with Spark ML).

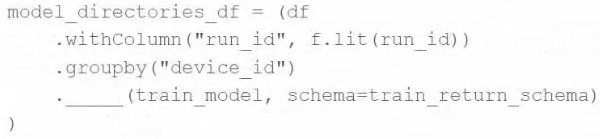

A machine learning engineer wants to parallelize the training of group-specific models using the Pandas Function API. They have developed the train_model function, and they want to apply it to each group of DataFrame df.

They have written the following incomplete code block:

Which of the following pieces of code can be used to fill in the above blank to complete the task?

The correct choice is applyInPandas. Calling groupBy on a DataFrame returns a GroupedData object, and GroupedData.applyInPandas passes each group to the function as a separate pandas DataFrame, so train_model runs once per group and its outputs are combined into a new Spark DataFrame with a schema that you declare. mapInPandas, by contrast, is a DataFrame method (not a GroupedData method) that applies a function to an iterator of pandas DataFrame batches drawn from each partition. It does not guarantee that a batch holds exactly one complete group, and it cannot be chained after groupBy, so it is not suitable for training one model per group.

Reference

PySpark Documentation on applying functions to grouped data: https://spark.apache.org/docs/latest/api/python/reference/api/pyspark.sql.GroupedData.applyInPandas.html

A data scientist has produced two models for a single machine learning problem. One of the models performs well when one of the features has a value of less than 5, and the other model performs well when the value of that feature is greater than or equal to 5. The data scientist decides to combine the two models into a single machine learning solution.

Which of the following terms is used to describe this combination of models?

Ensemble learning is a machine learning technique that involves combining several models to solve a particular problem. The scenario described fits the concept of ensemble learning, where two models, each performing well under different conditions, are combined to create a more robust model. This approach often leads to better performance as it combines the strengths of multiple models.

Reference

Introduction to Ensemble Learning: https://machinelearningmastery.com/ensemble-machine-learning-algorithms-python-scikit-learn/
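The scenario can be sketched as a simple threshold-routed combination: each row goes to whichever model performs well for its value of the splitting feature. This is only one way to combine models (bagging, boosting, and stacking combine predictions differently), and the helper make_threshold_ensemble and the two stand-in models are hypothetical, written as plain Python callables.

```python
from typing import Callable, Sequence

Model = Callable[[Sequence[float]], float]

def make_threshold_ensemble(low_model: Model, high_model: Model,
                            feature_idx: int, threshold: float = 5.0) -> Model:
    """Hypothetical helper: route rows with feature < threshold to low_model, else high_model."""
    def predict(row: Sequence[float]) -> float:
        if row[feature_idx] < threshold:
            return low_model(row)
        return high_model(row)
    return predict

# Hypothetical stand-in models, for illustration only.
def low_model(row: Sequence[float]) -> float:
    return 10.0 + row[0]

def high_model(row: Sequence[float]) -> float:
    return 100.0 + row[0]

ensemble = make_threshold_ensemble(low_model, high_model, feature_idx=0)
print(ensemble([3.0]))  # 13.0 (routed to low_model)
print(ensemble([5.0]))  # 105.0 (routed to high_model)
```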

Which of the following is a benefit of using vectorized pandas UDFs instead of standard PySpark UDFs?

Pandas UDFs (also called vectorized UDFs) are usually more efficient than standard PySpark UDFs. Spark uses Apache Arrow to transfer data to Python in batches, and the function receives each batch as a pandas Series or DataFrame, so it can apply vectorized pandas operations to the whole batch at once. Standard PySpark UDFs, by contrast, call the Python function once per row and serialize values with pickle rather than Arrow, which adds substantial overhead and can make them much slower.

Reference

PySpark Documentation on UDFs: https://spark.apache.org/docs/latest/api/python/user_guide/sql/arrow_pandas.html#pandas-udfs-a-k-a-vectorized-udfs

A data scientist has a Spark DataFrame spark_df. They want to create a new Spark DataFrame that contains only the rows from spark_df where the value in column price is greater than 0.

Which of the following code blocks will accomplish this task?

To filter rows in a Spark DataFrame based on a condition, use the filter method with a column expression. The correct PySpark syntax for this task is spark_df.filter(col('price') > 0), which keeps only the rows where the value in the price column is greater than 0. The col function, imported from pyspark.sql.functions, returns a Column object, so the comparison builds an expression that Spark evaluates across the cluster. The other options either use incorrect Spark DataFrame syntax or belong to other data manipulation libraries such as pandas. Reference:

PySpark DataFrame API documentation (Filtering DataFrames).
